We present a semi-automatic technique for computing surface correspondences between 3D facial scans in different expressions, such that scan data can be mapped into a common domain for facial animation. The technique can accurately correspond high-resolution scans of widely differing expressions -- without requiring intermediate pose sequences -- such that they can be used, together with reflectance maps, to create high-quality blendshape-based facial animation. We optimize correspondences through a combination of Image, Shape, and Internal forces, as well as Directable forces to allow a user to interactively guide and refine the solution. Key to our method is a novel representation, called an Active Visage, that balances the advantages of both deformable templates and correspondence computation in a 2D canonical domain. We show that our semi-automatic technique achieves more robust results than automated correspondence alone, and is more precise than is practical with unaided manual input.

A key component of our system is the active visage: a proxy for representing corresponded blendshapes. While conceptually similar to a deformable template, it differs in one key respect: a deformable template deforms a 3D mesh, whereas an active visage is constrained to the manifold of the non-neutral expression geometry and hence deforms only the correspondences. Because every corresponded point remains on the scanned surface, this implies no 3D error.
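The manifold constraint can be pictured with a minimal sketch. It assumes, purely for illustration, that the non-neutral scan is stored as a geometry image (an H x W grid of 3D points) and that the active visage is an array of 2D coordinates into that grid. The storage format and the names `sample_surface`, `geometry_image`, and `visage_uv` are hypothetical and are not taken from the system described here.

```python
import numpy as np

def sample_surface(geometry_image, uv):
    """Bilinearly sample 3D points from an (H, W, 3) geometry image at uv in [0, 1]^2."""
    h, w, _ = geometry_image.shape
    uv = np.clip(np.asarray(uv, dtype=float), 0.0, 1.0)
    x = uv[:, 0] * (w - 1)
    y = uv[:, 1] * (h - 1)
    # Top-left texel of each bilinear cell, clamped so x0 + 1 and y0 + 1 stay in range.
    x0 = np.minimum(np.floor(x).astype(int), w - 2)
    y0 = np.minimum(np.floor(y).astype(int), h - 2)
    fx = (x - x0)[:, None]
    fy = (y - y0)[:, None]
    top = (1 - fx) * geometry_image[y0, x0] + fx * geometry_image[y0, x0 + 1]
    bottom = (1 - fx) * geometry_image[y0 + 1, x0] + fx * geometry_image[y0 + 1, x0 + 1]
    return (1 - fy) * top + fy * bottom

# Toy "scan": a tilted plane z = 0.5 * x + 0.25 * y sampled on a 4 x 5 grid (assumed data).
u, v = np.meshgrid(np.linspace(0, 1, 5), np.linspace(0, 1, 4))
geometry_image = np.stack([u, v, 0.5 * u + 0.25 * v], axis=-1)

# Hypothetical active visage: 2D correspondences only, no 3D vertex positions of its own.
visage_uv = np.array([[0.2, 0.3], [0.5, 0.5], [0.9, 0.1]])

# Deforming the visage moves the 2D coordinates; 3D points are always read off the scan.
moved_uv = visage_uv + np.array([0.05, -0.02])
points = sample_surface(geometry_image, moved_uv)
```

In this sketch the optimization can only change `moved_uv`, so every resulting 3D point lies on the sampled scan surface by construction, which is the sense in which only the correspondences deform.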

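We have developed a modular optimization framework in which four different forces act on these active visages to compute accurate correspondences. These four forces are Image, Shape, Internal, and Directable forces; the Directable forces allow a user to interactively guide and refine the solution.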
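A modular force framework of this kind could be sketched as follows. This is a minimal illustration under stated assumptions, not the formulation used here: each force is assumed to be a function returning one 2D displacement per correspondence, forces are combined as a weighted sum, and the two example forces (neighbor averaging for an Internal force, user pins for a Directable force) are hypothetical stand-ins. Image and Shape forces would plug into the same `step` function but are not sketched, since their definitions are not given in this section.

```python
import numpy as np

def internal_force(uv, neighbors):
    """Assumed smoothness term: pull each point toward the mean of its neighbors."""
    return np.array([uv[n].mean(axis=0) for n in neighbors]) - uv

def directable_force(uv, pins):
    """Assumed user term: pull pinned points toward user-chosen targets."""
    force = np.zeros_like(uv)
    for index, target in pins.items():
        force[index] = np.asarray(target, dtype=float) - uv[index]
    return force

def step(uv, forces, weights, step_size=0.5):
    """Apply one update: move every point along the weighted sum of all forces."""
    total = sum(w * f(uv) for f, w in zip(forces, weights))
    return uv + step_size * total

# Toy visage: three correspondences in a chain, with the middle one displaced.
uv = np.array([[0.0, 0.0], [0.5, 0.4], [1.0, 0.0]])
neighbors = [[1], [0, 2], [1]]                 # hypothetical connectivity
pins = {0: (0.0, 0.0), 2: (1.0, 0.0)}          # hypothetical user constraints

forces = [
    lambda p: internal_force(p, neighbors),
    lambda p: directable_force(p, pins),
]
weights = [1.0, 1.0]

for _ in range(100):
    uv = step(uv, forces, weights)
```

Keeping each force behind the same function signature is what makes the sketch modular: adding or removing a force only changes the `forces` and `weights` lists.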

While fully automatic techniques exist, they often fail on difficult cases (e.g., extreme expressions), requiring one to handle such cases manually. However, with a manual approach it is impractical to precisely align fine-scale details (such as skin pores), as seen in Figure 2. Alignment of such features is important if high-resolution detail maps are to be blended seamlessly, without introducing ghosting artifacts.

In our approach the artist and computer interact: the artist guides the optimization, as needed,

through difficult or ambiguous situations; and the computation refines the optimization on the

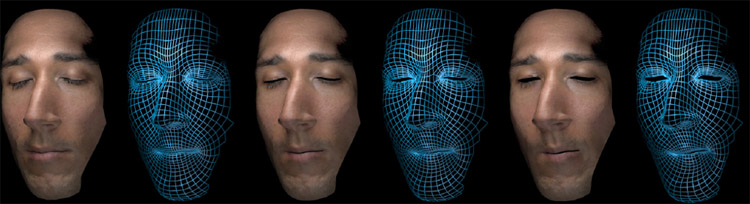

local scale (Figure 2). Figure 1 shows a blend between two expressions which have been

corresponded using our technique. The correspondences obtained with this technique enable

us to convincingly blend high-resolution maps. See the video for additional examples.