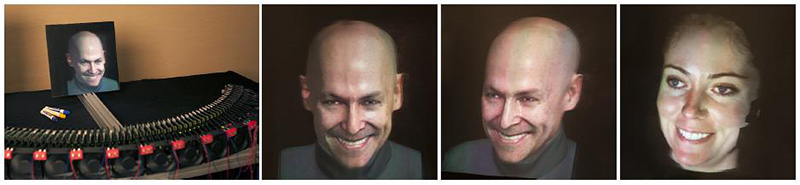

Projectors are rapidly shrinking in size, power consumption, and cost. Such projectors provide unprecedented flexibility to stack, arrange, and aim pixels without the need for moving parts. We present a dense projector display that is optimized in size and resolution to display an autostereoscopic, life-sized 3D human face with a wide 110-degree field of view. Applications include 3D teleconferencing and fully synthetic characters for education and interactive entertainment.

Our display is built from off-the-shelf components. Our projector array system consists of 72 Texas Instruments DLP Pico projectors, each of which has 480x320 resolution. Our projectors are spaced along a 124 cm curve with a radius of 60 cm. We removed the cases and built custom mounts to place the projectors just 14 mm apart with a 4 mm lens. The projectors are all aimed at a 30 cm x 30 cm vertically anisotropic screen. The ideal screen material should have a wide vertical diffusion lobe, so that the 3D image can be seen from multiple heights, and narrow horizontal diffusion, to preserve horizontal divergence. We use the horizontal cylindrical ridges of a plastic 40 lines-per-inch lenticular screen to scatter the light from each projector lens into a vertical stripe of light. To eliminate any gaps between stripes, we add a 1° horizontal by 60° vertical light-shaping diffuser sheet from Luminit. The total width of the horizontal diffusion should be equal to the angle between projectors. Our setup provides a high angular resolution of 1.66 degrees between views, achieving not only binocular stereo but also compelling motion parallax.
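The angular resolution follows from the arc geometry. The sketch below is only an illustration: it assumes the projectors are evenly spaced with one at each end of the arc, so that 72 projectors leave 71 gaps; the function name `angular_spacing_deg` is hypothetical.

```python
import math

def angular_spacing_deg(arc_length_cm, radius_cm, num_projectors):
    """Angle between adjacent projectors evenly spaced along a circular arc.

    Assumes one projector sits at each end of the arc, so there are
    num_projectors - 1 equal gaps (an assumption, not stated in the text).
    """
    total_angle_rad = arc_length_cm / radius_cm  # arc length = radius * angle
    gaps = num_projectors - 1
    return math.degrees(total_angle_rad) / gaps

spacing = angular_spacing_deg(124.0, 60.0, 72)
print(f"{spacing:.2f} degrees between views")  # prints "1.67 degrees between views"
```

Under these assumptions the arc spans about 118 degrees and the spacing comes out at roughly 1.67 degrees, in line with the 1.66 degrees quoted above.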

We drive our projector array using a single computer with twenty-four 1920x480 video outputs from four AMD FirePro W600 Eyefinity graphics cards. We then split each video signal using a Matrox TripleHeadToGo box into three 640x480 outputs, yielding the 72 projector signals. We adapted the multiperspective vertex shader technique of Jones et al. [2007] to work with fixed projector arrays and different display surface shapes. For a given mirror shape and diffusion profile, we approximate the reflected projector positions and use them to warp vertex positions to generate multiple-center-of-projection (MCOP) images. We project a series of ARToolKit markers from each projector to calibrate the display geometrically and photometrically. Our rendering algorithm can be used with other form factors, including both front and rear projection with flat and curved screens.
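The signal fan-out described above (4 cards, 24 outputs, 3 splits per output) can be sketched as an index mapping. This is a minimal illustration that assumes the outputs are divided evenly at six per card and that projectors are numbered in card, port, split order; the real cabling order is not given in the text, and `projector_route` is a hypothetical helper.

```python
NUM_CARDS = 4
OUTPUTS_PER_CARD = 6    # assumption: 24 outputs split evenly over 4 cards
SPLITS_PER_OUTPUT = 3   # each 1920x480 output is split into three signals
SUB_WIDTH = 640         # width in pixels of each split signal
NUM_PROJECTORS = NUM_CARDS * OUTPUTS_PER_CARD * SPLITS_PER_OUTPUT  # 72

def projector_route(index):
    """Map a projector index (0..71) to its card, port, and split.

    The numbering order is an assumption made for illustration only.
    """
    if not 0 <= index < NUM_PROJECTORS:
        raise ValueError(f"projector index out of range: {index}")
    output, split = divmod(index, SPLITS_PER_OUTPUT)
    card, port = divmod(output, OUTPUTS_PER_CARD)
    return {
        "card": card,
        "port": port,
        "split": split,
        # Horizontal pixel offset of this projector's region in the wide output
        "x_offset": split * SUB_WIDTH,
    }

print(projector_route(0))
print(projector_route(71))
```

A renderer could use such a table to decide which viewport of which wide framebuffer receives the image for a given projector.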

Our display achieves autostereoscopic horizontal parallax without lag. For faces that can make eye contact, vertical parallax is also important. We update vertical perspective by detecting the viewers' head positions using a Microsoft Kinect. Given the height and distance of each viewer, we warp the multiperspective rendering according to who will see each column of projected pixels.
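One simple way to decide who will see a given column of pixels is to pick the tracked viewer whose horizontal angle is closest to the direction of that column's ray. The sketch below is an assumption-laden simplification rather than the method used in the display: the `Viewer` fields, the nearest-angle rule, and the fallback viewer are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Viewer:
    azimuth_deg: float   # horizontal angle of the head as seen from the screen
    height_cm: float     # tracked head height
    distance_cm: float   # tracked distance from the screen

def viewer_for_column(ray_azimuth_deg, viewers, default):
    """Return the viewer most likely to see a ray leaving at ray_azimuth_deg.

    Uses a nearest-angle rule (an illustrative assumption). Falls back to
    `default` when no viewers are currently tracked.
    """
    if not viewers:
        return default
    return min(viewers, key=lambda v: abs(v.azimuth_deg - ray_azimuth_deg))

default_viewer = Viewer(azimuth_deg=0.0, height_cm=160.0, distance_cm=200.0)
tracked = [Viewer(-20.0, 150.0, 200.0), Viewer(30.0, 170.0, 180.0)]

chosen = viewer_for_column(10.0, tracked, default_viewer)
print(chosen.height_cm, chosen.distance_cm)
```

The height and distance of the chosen viewer would then set the vertical perspective used when rendering that column.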

In collaboration with Activision, Inc., we demonstrate Digital Ira: a real-time facial rig incorporating expression blending and physically based eye and skin shaders [Alexander et al. 2013]. The rig is based on multiple high-resolution Light Stage scans [Ghosh et al. 2011]. The actor's performance clips were captured at 30 fps under flat light conditions using a multi-camera rig. An offline performance tracking algorithm was used to generate corresponded geometry and per-frame blendshape weights. Mesh animation is transferred to standard bone animation on a game-ready 4k mesh using a bone-weight and transform solver. Surface stress values are used to blend albedo, specular, normal, and displacement maps from the high-resolution scans per-vertex at run time. DX11 rendering includes subsurface scattering (SSS), translucency, eye refraction and caustics, and a physically based two-lobe specular reflection. Even with all these effects, we can render 72 dynamic views of the face simultaneously.
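To illustrate what per-frame blendshape weights represent, the sketch below evaluates a standard linear blendshape model, in which each target contributes its offset from the neutral mesh scaled by its weight. The text does not specify the exact formulation used for Digital Ira, so this is a generic example; `blend_mesh` and the toy data are hypothetical.

```python
def blend_mesh(neutral, targets, weights):
    """Evaluate a linear blendshape model.

    neutral: list of (x, y, z) vertex positions for the neutral face
    targets: dict mapping shape name -> list of (x, y, z) positions
    weights: dict mapping shape name -> blend weight for this frame
    Each vertex becomes neutral + sum_i w_i * (target_i - neutral).
    """
    result = []
    for vi, base in enumerate(neutral):
        pos = list(base)
        for name, weight in weights.items():
            target = targets[name][vi]
            for axis in range(3):
                pos[axis] += weight * (target[axis] - base[axis])
        result.append(tuple(pos))
    return result

# Toy two-vertex mesh with two expression targets (illustrative data only)
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
targets = {
    "smile": [(0.0, 1.0, 0.0), (1.0, 1.0, 0.0)],
    "jaw_open": [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)],
}
frame_weights = {"smile": 0.5, "jaw_open": 0.25}

blended = blend_mesh(neutral, targets, frame_weights)
print(blended)  # [(0.0, 0.5, 0.5), (1.0, 0.5, 0.5)]
```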